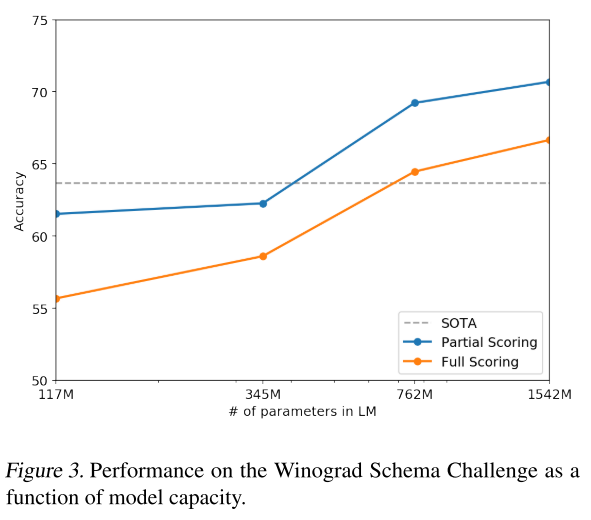

In order to understand superintelligence, we should first characterise what we mean by intelligence. We can start with Legg’s well-known definition, which identifies intelligence as the ability to do well on a broad range of cognitive tasks. The key distinction I’ll draw in this section is between agents that understand how to do well at many tasks because they have been specifically optimised for each task (which I’ll call the task-based approach to AI), versus agents which can understand new tasks with little or no task-specific training, by generalising from previous experience (the generalisation-based approach).

The task-based approach is analogous to how humans harnessed electricity: while electricity is a powerful and general technology, we still need to design specific ways to apply it to each task. Similarly, computers are powerful and flexible tools - but even though they can process arbitrarily many different inputs, detailed instructions for how to do that processing need to be individually written to build each piece of software. Meanwhile our current reinforcement learning algorithms, although powerful, produce agents that are only able to perform well on specific tasks at which they have a lot of experience - Starcraft, DOTA, Go, and so on. In Reframing Superintelligence, Drexler argues that our current task-based approach will scale up to allow superhuman performance on a range of complex tasks (although I’m skeptical of this claim).

An example of the generalisation-based approach can be found in large language models like GPT-2 and GPT-3. GPT-2 was first trained on the task of predicting the next word in a corpus, and then achieved state-of-the-art results on many other language tasks, without any task-specific fine-tuning! This was a clear change from previous approaches to natural language processing, which only scored well when trained to do specific tasks on specific datasets. Its successor, GPT-3, has displayed a range of even more impressive behaviour. I think this provides a good example of how an AI could develop cognitive skills (in this case, an understanding of the syntax and semantics of language) which generalise to a range of novel tasks. The field of meta-learning aims towards a similar goal.

We can also see the potential of the generalisation-based approach by looking at how humans developed. As a species, we were “trained” by evolution to have cognitive skills including rapid learning capabilities, sensory and motor processing, and social skills. As individuals, we were also “trained” during our childhoods to fine-tune those skills, to understand spoken and written language, and to possess detailed knowledge about modern society. However, the key point is that almost all of this evolutionary and childhood learning occurred on different tasks from the economically useful ones we perform as adults. We can perform well on the latter category only by reusing the cognitive skills and knowledge that we gained previously. In our case, we were fortunate that those cognitive skills were not too specific to tasks in the ancestral environment, but were rather very general skills. In particular, the skill of abstraction allows us to extract common structure from different situations, which allows us to understand them much more efficiently than by learning about them one by one. Then our communication skills and theories of mind allow us to share our ideas.